By John Patzakis

A model eDiscovery order I recently came across, issued by a respected judge in a federal district court, included a provision requiring parties to de-NIST their files in the course of eDiscovery production. On its face, this may seem like a reasonable technical requirement. But the provision reflects a fundamental misunderstanding of how proportional, targeted eDiscovery collection should work, and it points to a broader problem in our industry that deserves attention.

For those unfamiliar with the term, de-NISTing refers to the process of filtering out known, irrelevant system files from a forensic collection by matching file hashes against the National Institute of Standards and Technology’s reference database of known file signatures (the National Software Reference Library). The NIST database catalogs hundreds of thousands of known operating system files, executables, DLL files, and other system-generated data that have no evidentiary value whatsoever. De-NISTing removes these files from a collection so that reviewers are not burdened with wading through mountains of irrelevant system data. The reason you need to de-NIST in the first place is that you collected a full-disk image, capturing everything on the drive, relevant or not.
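To make the mechanics concrete, here is a minimal Python sketch of the filtering step described above. It assumes the known-file list has already been loaded as a set of uppercase SHA-1 hex strings; the function names `sha1_of_file` and `de_nist` are hypothetical and do not come from any particular eDiscovery tool.

```python
import hashlib
from pathlib import Path
from typing import Iterable


def sha1_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the uppercase SHA-1 hex digest of a file, read in chunks."""
    digest = hashlib.sha1()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest().upper()


def de_nist(paths: Iterable[Path], known_hashes: set[str]) -> list[Path]:
    """Keep only the files whose hash is NOT in the known-file set.

    `known_hashes` is an assumption of this sketch: a set of uppercase
    SHA-1 strings loaded beforehand from a known-file reference list.
    """
    return [path for path in paths if sha1_of_file(path) not in known_hashes]
```

Note that every file in the collection has to be read and hashed before it can be discarded, which is where the processing time of this step comes from.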

And that is precisely the problem with requiring de-NISTing in a model eDiscovery order. As I have written extensively, including in our recent white paper on proportionality in eDiscovery, courts have consistently held that full-disk imaging is not the appropriate default for civil litigation collections. Going all the way back to Diepenhorst v. City of Battle Creek in 2006, courts have warned that imaging a hard drive results in the production of massive amounts of irrelevant, and potentially privileged, information. More recently, in Motorola Solutions v. Hytera Communications Corp., the court emphasized that forensic examination of a party’s computers “is no routine matter” and that courts must use caution to avoid unduly impinging on privacy interests. A model order that presupposes full-disk imaging by requiring de-NISTing is, at minimum, inconsistent with this well-established body of case law.

The 2015 amendments to Federal Rule of Civil Procedure 26(b)(1) established a clear six-pronged proportionality framework for eDiscovery, requiring parties and courts to weigh factors including the importance of the issues at stake, the amount in controversy, the parties’ resources, and whether the burden or expense of proposed discovery outweighs its likely benefits. Courts have taken these amendments seriously and have consistently limited overbroad discovery requests on proportionality grounds. A blanket model order requirement to de-NIST implicitly endorses a collect-everything methodology that runs counter to the proportionality principles embedded in Rule 26(b)(1) and the extensive case law that has developed around it.

So how does a provision like this end up in a model court order? The answer, I believe, lies in the undue influence that certain eDiscovery service providers have had on collection practices and, ultimately, on the drafting of court orders and guidelines. Some service providers have a clear financial incentive to collect as much data as possible, since their fees are calculated on a per-gigabyte basis — meaning the more data collected, processed, and hosted, the higher the bill. This volume-based business model has shaped industry “best practices” in ways that favor over-collection, and that mindset has quietly seeped into the thinking of some federal judges and the model orders they issue. What gets dressed up as technical diligence is, in many cases, simply an artifact of a business model that profits from excess.

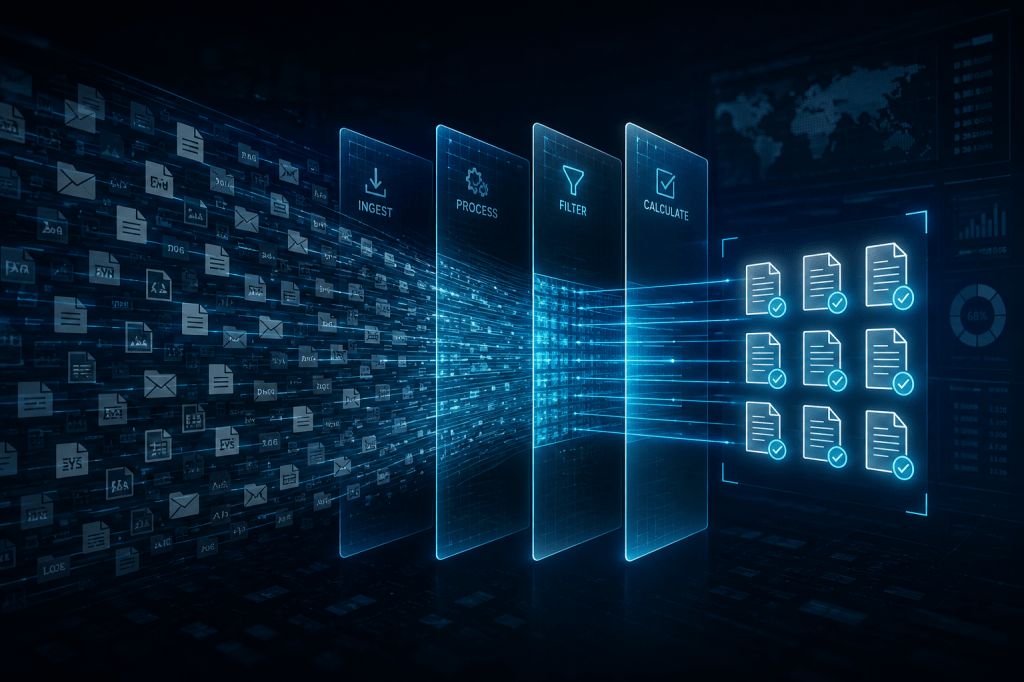

If you are conducting a properly scoped, targeted eDiscovery collection that is consistent with the principles of proportionality — as the Federal Rules and overwhelming case law require — there is simply no reason to de-NIST. A targeted collection does not reach system files, executables, DLLs, or other non-user-generated data in the first place. You are collecting potentially relevant ESI from identified custodians, scoped by search terms, date ranges, file types, and data sources. You never touch the data that de-NISTing is designed to filter out, which means the entire de-NISTing step — and its associated cost and processing time — is unnecessary overhead born entirely of an overbroad collection methodology.
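The scoping criteria named above (custodians, search terms, date ranges, file types) can be sketched as a simple filter. This is an illustrative Python sketch only: `FileRecord`, `CollectionScope`, `in_scope`, and `collect` are hypothetical names, the substring keyword match stands in for a real search index, and nothing here represents the API of any actual collection product.

```python
from dataclasses import dataclass
from datetime import date
from pathlib import PurePath


@dataclass(frozen=True)
class FileRecord:
    """Hypothetical description of one candidate file."""
    custodian: str
    path: str
    modified: date
    text: str


@dataclass(frozen=True)
class CollectionScope:
    """Hypothetical scope agreed for a targeted collection."""
    custodians: frozenset[str]
    extensions: frozenset[str]  # lowercase, with leading dot, e.g. ".docx"
    start: date
    end: date
    terms: tuple[str, ...]


def in_scope(record: FileRecord, scope: CollectionScope) -> bool:
    """Return True only if the record meets every scoping criterion."""
    if record.custodian not in scope.custodians:
        return False
    if PurePath(record.path).suffix.lower() not in scope.extensions:
        return False
    if not (scope.start <= record.modified <= scope.end):
        return False
    body = record.text.lower()
    # Naive case-insensitive substring match, a stand-in for real search.
    return any(term.lower() in body for term in scope.terms)


def collect(records: list[FileRecord], scope: CollectionScope) -> list[FileRecord]:
    """Select only in-scope records; everything else is never collected."""
    return [record for record in records if in_scope(record, scope)]
```

Because the file-type criterion lists only user document types, an executable or DLL fails the check before its contents are ever considered, so there is nothing left for a later de-NISTing pass to remove.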

This is precisely the approach built into X1 Enterprise, which enables legal and IT teams to conduct targeted, remote collections across large numbers of custodians without ever capturing the system-level data that necessitates de-NISTing. X1 Enterprise collects only the user-generated, potentially relevant ESI within defined parameters, preserving full metadata integrity and maintaining a documented chain of custody — satisfying every requirement for forensic soundness without the bloat, expense, and proportionality concerns of full-disk imaging. In an era where courts are increasingly scrutinizing eDiscovery costs and demanding proportionality, practitioners and judges alike should be asking not how to manage the mess created by over-collection, but how to avoid creating that mess in the first place.